Generating design systems using deep learning

How do you enable people to easily design beautiful mobile and web apps when they have no design background?

“Easy: you just throw some neural networks at the problem!”

“Well, kinda”

You might have heard of Uizard already through our early machine learning research, pix2code, or through our technology for turning hand-drawn sketches into interactive app prototypes.

Today, I would like to talk about one of our new AI-driven features: automatic theme generation.

Design is hard for non-designers

Throughout our private beta program, a growing majority of our recurring active users turned out to be startup founders, product managers, consultants, business analysts, and marketing teams. We’ve also seen traction among user experience (UX) professionals and user researchers, who typically work closely with design teams but aren’t trained as graphic / UI designers themselves. Put simply, Uizard became a design tool for non-designers.

Uizard evolved to become an easy-to-use design tool where you can create mobile apps, websites, and desktop software screens with drag-and-drop components and templates, instead of the pixels and vectors used in most UI design tools. You can also upload hand-drawn wireframe sketches and have them automatically transformed into editable screens. Pretty easy to use!

Because Uizard is a component-based design tool, components are organized as a minimalist design system: the Uizard theme (check this link or this one if you’re curious about atomic design and design systems). Generally speaking, a Uizard theme is a way to organize and categorize components and to define their colors, typography, and styling properties. These components (e.g. buttons, labels, input fields) can be assembled into reusable templates (e.g. login form, image gallery, payment section) that can then be used to design screens and apps.
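To make the idea more concrete, here is a minimal sketch of how a theme like this could be modeled in Python. Every class and field name below is hypothetical and chosen purely for illustration; this is not Uizard’s actual data model.

```python
from dataclasses import dataclass, field


@dataclass
class Component:
    """A single reusable UI element, e.g. a button or an input field."""
    name: str
    category: str  # e.g. "button", "label", "input"
    style: dict[str, str] = field(default_factory=dict)


@dataclass
class Template:
    """A reusable group of components, e.g. a login form."""
    name: str
    component_names: list[str]


@dataclass
class Theme:
    """Hypothetical theme: shared tokens plus the components that use them."""
    name: str
    colors: dict[str, str] = field(default_factory=dict)
    fonts: dict[str, str] = field(default_factory=dict)
    components: dict[str, Component] = field(default_factory=dict)
    templates: dict[str, Template] = field(default_factory=dict)

    def add_component(self, component: Component) -> None:
        self.components[component.name] = component

    def add_template(self, template: Template) -> None:
        # A template may only reference components defined in this theme.
        missing = [n for n in template.component_names if n not in self.components]
        if missing:
            raise KeyError(f"unknown components: {missing}")
        self.templates[template.name] = template


# Illustrative values only.
theme = Theme(name="demo", colors={"primary": "#3366ff"}, fonts={"body": "Inter"})
theme.add_component(Component("primary_button", "button", {"border-radius": "8px"}))
theme.add_component(Component("email_input", "input", {"padding": "12px"}))
theme.add_template(Template("login_form", ["email_input", "primary_button"]))
```

Keeping colors and fonts at the theme level, rather than on each component, is what would let a whole project be restyled by swapping a single theme.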

Although Uizard comes with a few pre-made themes, until now it was hard for our non-designer customers to:

- Easily create new themes that would look good given that they don’t have a design background.

- Easily import existing design artifacts into Uizard to make sure that their projects would match their company’s brand identity.

Two of our early adopters mentioned this limitation during our private beta phase: Valentin de Bruyn and Simon Hangaard Hansen (thanks again guys!).

We then asked ourselves: can we use deep learning to generate themes automatically?

Turns out, we can indeed use deep learning and neural networks to do just that! Woohoo! You’ll understand in the section below why machine learning is needed here and why we can’t simply rely on “traditional” software development.

As described in this blog post, we’ve built a system that can extract components, recognize fonts, and extract design tokens and styling properties (text transform, border radius, padding, font weight, shadow, etc.) from images, URLs, and even Sketch files. This allows our customers to:

- Easily generate original themes from any source of visual inspiration.

- Easily extract components and styling properties from an existing brand identity, style guide, or design system.

To the best of our knowledge, this is a world first. (Yes, we are pretty proud of our technology, sorry about that…) Adobe has built a tool to extract colors from images, but it is unable to extract any other information. Someone built a tool to extract design tokens from a URL, but it only pulls out a list of colors, fonts, and spacing information, not fully ready-to-use components.

So how did we make it work?

Generating a design system from an image

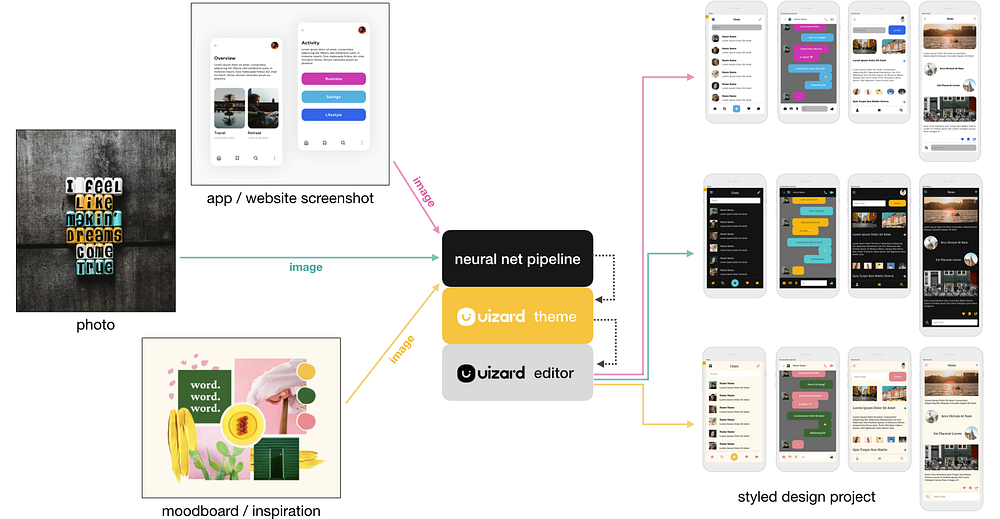

Since we had already developed an entire neural network pipeline for transforming user interface sketches into editable screens, we could reuse some of the building blocks for this new feature. Although a hand-drawn wireframe looks different from a user interface screenshot, both images represent the very same concepts: a button, a body of text, an input field, a footer with icons, etc. In other words, both types of image come from the same domain.

Our neural network pipeline is trained to process an image as input and identify components, recognize fonts, and extract styling properties (text size, border radius, padding, font weight, shadow, etc.). This enables the creation of a custom-made Uizard theme in seconds by simply uploading a single image.

The resulting model is able to generalize fairly well and doesn’t really care what is shown in the image as long as it’s somewhat design-related.

This means that we can also use the same pipeline to generate design system components from a photo, a moodboard, an inspirational image, or virtually any visual input. The generated themes can then easily be applied to any Uizard project through the design tool editor as illustrated below and as showcased in this video.

Generating a design system from a URL

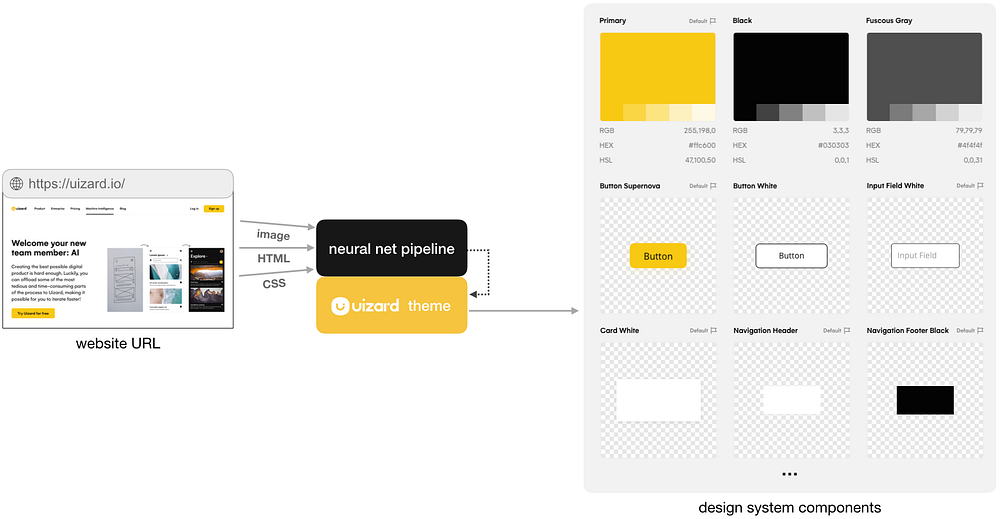

The vast majority of our customers use Uizard for their daily work at a startup, an agency, or an enterprise. That means most of them work in a company with an existing brand identity, style guide, or design system.

It’s pretty crazy to ask everyone to re-create their company design style from scratch when they need to start using a new tool. Although it’s very common in most software to start from scratch, it actually doesn’t make sense when you think about it. Virtually every company in the world has a website or at the very least, a landing page full of design assets. In other words, most companies have design artifacts readily available, encoded in the HTML and CSS of their website.

“Please! Why do we need deep learning for that? Why can’t you just process the raw CSS and HTML?”

“Because NOISE”

There’s no standard for implementing or naming components in HTML. You can create a button using a <div>, an <a>, a <button>, or other tags. It’s therefore practically impossible to naively parse the HTML/CSS of a web page and hope to accurately identify the semantic concepts behind every single element. Machine learning is a powerful tool that can help us here.
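To illustrate the problem, here is a small Python sketch of the naive approach using the standard library’s `html.parser`. The markup and class names are made up for the example; the point is only that a tag-based rule finds one of three elements a visitor would perceive as buttons.

```python
from html.parser import HTMLParser


class NaiveButtonFinder(HTMLParser):
    """Counts only elements that use the literal <button> tag."""

    def __init__(self):
        super().__init__()
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            self.count += 1


# Three elements a visitor would perceive as buttons (made-up markup).
page = """
<button class="cta">Sign up</button>
<a href="/login" class="btn btn-primary">Log in</a>
<div class="x7f3" onclick="buy()">Buy now</div>
"""

finder = NaiveButtonFinder()
finder.feed(page)
print(finder.count)  # 1: the <a> and <div> buttons are missed
```

You could keep adding rules (class names containing "btn", click handlers, and so on), but every website follows its own conventions, so the rule list never ends.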

Our algorithm first needs to take a screenshot of the website and gather the CSS and HTML files. Our pipeline then processes the website screenshot image and gets access to metadata (i.e. the HTML/CSS files) to make better and more accurate predictions as highlighted in the image below. If and when errors occur — because no single piece of technology has ever been flawless — everything is editable and adjustable in the Uizard platform. Check out this demo video to see this feature in action.

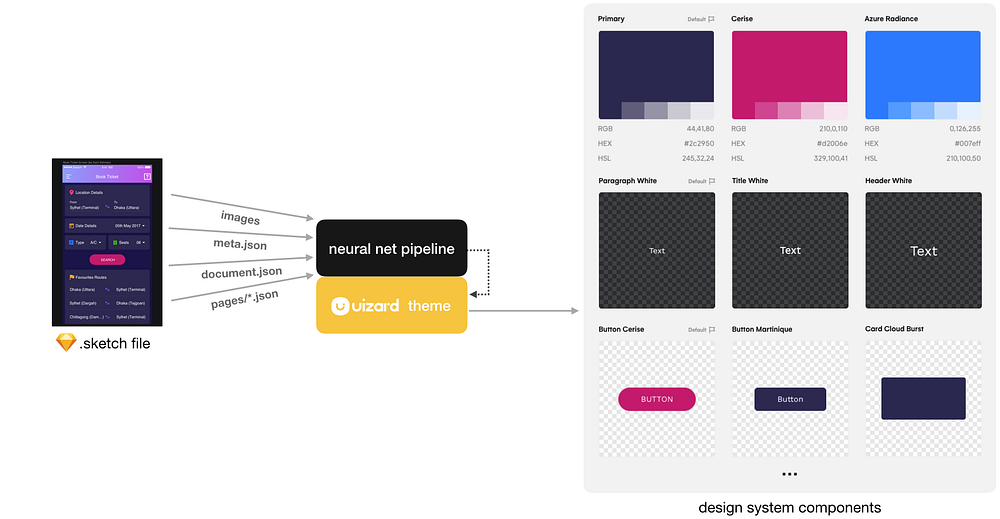

Generating a design system from a Sketch file

Sketch is one of the most popular vector-based digital design tools for expert user interface designers. Although other tools are available out there, Sketch has historically been the most popular solution used by design teams. It’s therefore very likely that most companies have Sketch files lying around even if they transitioned to another design tool.

“Come on, that’s a pretty easy task! Everything is probably named and well-organized in a Sketch file, right? Right?”

“NOPE”

To begin with, designers are free to name layers and organize their Sketch file the way they want. Most importantly, Sketch is vector-based software, so there’s no such thing as a “button” or an “input field”. Everything is made of shapes, which are made of vectors. There’s no semantic information available and no naming convention. You can probably see the commonality with the problem we discussed earlier for extracting components and styling properties from HTML/CSS files.

A Sketch file is basically a zip archive containing a bunch of JSON files and images. One of these images is a preview of the file content as it would be rendered by the Sketch runtime. We can therefore feed our engine as we did for the URL-based theme generator: by giving our neural net pipeline both the preview image and access to all available metadata to adjust predictions.
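As a rough sketch of that first step, the snippet below opens a zip archive with Python’s standard `zipfile` module and separates the JSON metadata from the images. The function name `split_archive` is hypothetical, and the entry names in the stand-in archive are illustrative assumptions; a real Sketch file may be laid out differently.

```python
import io
import json
import zipfile


def split_archive(data: bytes):
    """Return (metadata, images) from a zip archive given as bytes.

    metadata maps each .json entry name to its parsed content;
    images maps each .png entry name to its raw bytes.
    """
    metadata, images = {}, {}
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        for name in archive.namelist():
            if name.endswith(".json"):
                metadata[name] = json.loads(archive.read(name))
            elif name.endswith(".png"):
                images[name] = archive.read(name)
    return metadata, images


# Build a tiny stand-in archive in memory; entry names are illustrative.
buffer = io.BytesIO()
with zipfile.ZipFile(buffer, "w") as archive:
    archive.writestr("document.json", json.dumps({"pages": ["page-1"]}))
    archive.writestr("pages/page-1.json", json.dumps({"layers": []}))
    archive.writestr("previews/preview.png", b"\x89PNG placeholder bytes")

metadata, images = split_archive(buffer.getvalue())
print(sorted(metadata))  # ['document.json', 'pages/page-1.json']
print(sorted(images))    # ['previews/preview.png']
```

In this picture, the image would go to the vision side of the pipeline while the parsed JSON stays available as metadata.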

This works (surprisingly) well! This technique could be extended and used as a generalized framework to process files from other vector-based design tools such as Figma or Adobe XD. You can check out this demo video to see how it works in practice.

One of the main limitations of this technique is that the preview image is capped at 2048 pixels on its largest side. That means that for very large Sketch projects with a lot of screens, the fine details in the preview image are highly pixelated, which can lead to inaccurate predictions. Luckily, everything is editable and adjustable in the Uizard platform!
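A quick back-of-the-envelope calculation shows why this matters. The sketch below assumes the preview keeps the canvas aspect ratio and is simply downscaled until its largest side fits the cap; the canvas dimensions and the helper name `preview_scale` are hypothetical.

```python
def preview_scale(width: int, height: int, max_side: int = 2048) -> float:
    """Scale factor applied when the largest side is capped at max_side."""
    largest = max(width, height)
    return min(1.0, max_side / largest)


# Hypothetical canvas: many screens laid out side by side.
scale = preview_scale(20480, 8000)
print(scale)       # 0.1
print(16 * scale)  # 1.6 -> a 16 px label is about 1.6 px tall in the preview
```

At that scale, text and thin borders collapse into a couple of pixels, which leaves very little visual signal for a model to work with.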

Parting words

Needless to say, we are pretty proud of our theme generator technology! You can try it for free at https://uizard.io.

Of course, the Uizard team is not just me! Kudos to Javier Fuentes Alonso, the lead research engineer behind our theme generators; James Monk and Arturo Arranz Mateo from the machine intelligence team; Ioannis Sintos, our MLOps / DevOps magician; and Mathias Vielwerth and Henrik Haugbølle for the sleek API between our machine learning models and the Uizard platform.

Thanks for reading and make sure to follow me, Javier, and Uizard on Twitter if you are interested in machine learning applied to product design and software development.

We have a lot more coming on the AI front, so stay tuned! 😊